How to build a multi-layer neural network in Python?

How do you build a multi-layer neural network in Python? This question comes up often in day-to-day work. The steps below walk through one way to do it.


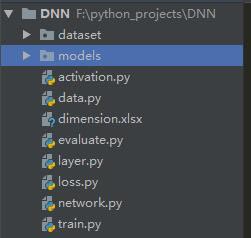

The model is built to my own design. The source code consists of seven .py files, as shown in the figure below:

In order of creation, they are: data.py, layer.py, network.py, activation.py, loss.py, train.py, and evaluate.py. data.py loads the data and preprocesses it. layer.py defines a Layer class that represents layer L. network.py abstracts a Network class that chains the layers passed to it, feeding each layer's output into the next, to form a network. loss.py defines the cross-entropy loss function and its derivative. activation.py implements the relu and sigmoid activation functions and their derivatives. train.py creates the layers, assembles them into a network, trains it on the data, and saves the model. Finally, evaluate.py runs the model on the test set.

The network has two main parts, forward propagation and backward propagation:

In both directions, however, every layer in the network can be abstracted in the same way, so we create a Layer class:

Forward propagation at layer L:
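Written in the n[L] notation used in this article, and matching what the `forward` method in layer.py computes, the forward step of layer L can be stated as:

$$
Z^{[L]} = W^{[L]} A^{[L-1]} + b^{[L]}, \qquad A^{[L]} = g^{[L]}\left(Z^{[L]}\right)
$$

Here $A^{[0]} = X$ is the input and $g^{[L]}$ is the layer's activation function (relu or sigmoid in this program).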

Backward propagation at layer L:
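The backward step can be written in the same notation. This is a summary of what the `backward` method in layer.py computes, where $m$ is the number of samples, $\odot$ is element-wise multiplication, and $\alpha$ is the learning rate:

$$
\begin{aligned}
dZ^{[L]} &= dA^{[L]} \odot g^{[L]\prime}\left(Z^{[L]}\right) \\
dW^{[L]} &= \frac{1}{m}\, dZ^{[L]} \left(A^{[L-1]}\right)^{\top} \\
db^{[L]} &= \frac{1}{m} \sum_{i=1}^{m} dZ^{[L](i)} \\
dA^{[L-1]} &= \left(W^{[L]}\right)^{\top} dZ^{[L]} \\
W^{[L]} &\leftarrow W^{[L]} - \alpha\, dW^{[L]}, \qquad b^{[L]} \leftarrow b^{[L]} - \alpha\, db^{[L]}
\end{aligned}
$$

Note that $dA^{[L-1]}$ is computed with the weights as they were before the update, which is also the order used in the code.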

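Before writing any code, the most important step is to pin down the dimensions of every variable and parameter:

Forward propagation:

Note: n[L] is the number of neurons in the current layer (layer L), and n[L-1] is the number of neurons in the previous layer (layer L-1). In this program, for example, n[0]=12288, n[1]=1000, n[2]=500, n[3]=1.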
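The layer sizes above determine every forward-pass shape: W[L] is (n[L], n[L-1]), b[L] is (n[L], 1), and Z[L] and A[L] are (n[L], m). A minimal sketch that checks this, assuming a hypothetical batch size `m = 4` and zero-filled placeholder arrays (the names `n`, `m`, `A`, and `shapes` are illustrative and not taken from the source files):

```python
import numpy as np

# Layer sizes stated in the text; n[0] is the input size (64 * 64 * 3 = 12288)
n = [12288, 1000, 500, 1]
m = 4  # hypothetical batch size, chosen only for illustration

A = np.zeros((n[0], m), dtype=np.float32)  # placeholder input, one column per sample
shapes = []
for L in range(1, len(n)):
    W = np.zeros((n[L], n[L - 1]), dtype=np.float32)  # maps n[L-1] inputs to n[L] outputs
    b = np.zeros((n[L], 1), dtype=np.float32)         # broadcasts across the m columns
    Z = np.dot(W, A) + b                              # shape (n[L], m)
    assert Z.shape == (n[L], m)
    shapes.append((W.shape, b.shape, Z.shape))
    A = Z  # an element-wise activation keeps the shape of Z, so Z stands in for A

for L, (w_shape, b_shape, z_shape) in enumerate(shapes, start=1):
    print('layer %d: W%s b%s Z/A%s' % (L, w_shape, b_shape, z_shape))
```

Because the activation is applied element-wise, A[L] always has the same shape as Z[L], which is why the sketch skips the activation entirely.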

Backward propagation:
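Each gradient has the same shape as the quantity it differentiates, which is what the assertions in layer.py check. Summarized in the same notation, with $m$ the number of samples:

$$
\begin{aligned}
dA^{[L]},\ dZ^{[L]} &\in \mathbb{R}^{\,n^{[L]} \times m} \\
dW^{[L]} &\in \mathbb{R}^{\,n^{[L]} \times n^{[L-1]}} \\
db^{[L]} &\in \mathbb{R}^{\,n^{[L]} \times 1} \\
dA^{[L-1]} &\in \mathbb{R}^{\,n^{[L-1]} \times m}
\end{aligned}
$$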

1. data.py

# coding: utf-8
# 2019/7/20 18:59
import h5py
import numpy as np


def get_train():
    # Open the HDF5 training file read-only; np.array copies the data out
    with h5py.File('dataset/train_catvnoncat.h5', 'r') as f:
        x_train = np.array(f['train_set_x'])  # training images as an np.array
        y_train = np.array(f['train_set_y'])  # training labels
    return x_train, y_train


def get_test():
    with h5py.File('dataset/test_catvnoncat.h5', 'r') as f:
        x_test = np.array(f['test_set_x'])  # test images as an np.array
        y_test = np.array(f['test_set_y'])  # test labels
    return x_test, y_test


def preprocess(X):
    # Optional scaling of X from 0-255 to 0-1 (currently disabled)
    # X = X / 255
    # Flatten from (m, 64, 64, 3) to (m, 12288), then transpose to (12288, m)
    X = X.reshape([X.shape[0], X.shape[1] * X.shape[2] * X.shape[3]]).T
    return X


if __name__ == '__main__':
    x1, y1 = get_train()
    x2, y2 = get_test()
    print(x1.shape, y1.shape)
    print(x2.shape, y2.shape)

    # Show 15 training images and print their labels
    from matplotlib import pyplot as plt
    plt.figure()
    for i in range(1, 16):
        plt.subplot(3, 5, i)
        plt.imshow(x1[i])
        print(y1[i])
    plt.show()
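To see what the reshape in `preprocess` does without needing the dataset files, here is a small sketch on synthetic data. The random images and the `normalize` flag are assumptions added for illustration; the original function has no such parameter and leaves the scaling step commented out.

```python
import numpy as np


def preprocess(X, normalize=False):
    # `normalize` is a hypothetical flag for this sketch only
    if normalize:
        X = X / 255.0  # scale pixel values from 0-255 to 0-1
    # (m, 64, 64, 3) -> (m, 12288) -> (12288, m): one column per image
    return X.reshape(X.shape[0], -1).T


rng = np.random.default_rng(0)
# Five fake 64x64 RGB images standing in for the real training set
X = rng.integers(0, 256, size=(5, 64, 64, 3), dtype=np.uint8)

flat = preprocess(X)
scaled = preprocess(X, normalize=True)

print(flat.shape)  # (12288, 5)
# Column i of the result is image i flattened in row-major order
print(np.array_equal(flat[:, 2], X[2].ravel()))  # True
```

The transpose matters because layer.py expects samples as columns: its first assertion checks that the input has n[L-1] rows.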

2. layer.py

# coding: utf-8
# 2019/7/21 9:22
import numpy as np


class Layer:
    def __init__(self, nL, nL_1, activ, activ_deri, learning_rate):
        # Arguments: number of neurons in this layer, number of neurons in the
        # previous layer, activation function, its derivative, learning rate
        self.nL = nL
        self.nL_1 = nL_1
        self.g = activ
        self.g_d = activ_deri
        self.alpha = learning_rate
        self.W = np.random.randn(nL, nL_1) * 0.01
        self.b = np.random.randn(nL, 1) * 0.01

    # Forward propagation:
    # 1. Compute Z = WX + b
    # 2. Compute A = g(Z)
    def forward(self, AL_1):
        self.AL_1 = AL_1  # cache the input for the backward pass
        assert (AL_1.shape[0] == self.nL_1)
        self.Z = np.dot(self.W, AL_1) + self.b
        assert (self.Z.shape[0] == self.nL)
        AL = self.g(self.Z)
        return AL

    # Backward propagation:
    # 1. m is the number of samples
    # 2. Compute dZ, dW, db, dAL_1
    # 3. Gradient descent: update W and b
    def backward(self, dAL):
        assert (dAL.shape[0] == self.nL)
        m = dAL.shape[1]
        dZ = np.multiply(dAL, self.g_d(self.Z))
        assert (dZ.shape[0] == self.nL)
        dW = np.dot(dZ, self.AL_1.T) / m
        assert (dW.shape == (self.nL, self.nL_1))
        db = np.mean(dZ, axis=1, keepdims=True)
        assert (db.shape == (self.nL, 1))
        # Computed with the weights as they were before the update below
        dAL_1 = np.dot(self.W.T, dZ)
        assert (dAL_1.shape[0] == self.nL_1)
        # Gradient descent
        self.W -= self.alpha * dW
        self.b -= self.alpha * db
        return dAL_1
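One way to gain confidence in the `backward` formulas is a numerical gradient check. The sketch below is not part of the original project: it re-implements the same dZ, dW, db steps as standalone functions for a single sigmoid layer with cross-entropy loss (the names `cost` and `analytic_grads` are hypothetical) and compares them with central finite differences.

```python
import numpy as np


def sigmoid(z):
    return 1 / (1 + np.exp(-z))


def cost(W, b, X, Y):
    # Mean cross-entropy of a single sigmoid layer
    A = sigmoid(np.dot(W, X) + b)
    return np.mean(-(Y * np.log(A) + (1 - Y) * np.log(1 - A)))


def analytic_grads(W, b, X, Y):
    # Same steps as Layer.backward, minus the parameter update
    m = X.shape[1]
    A = sigmoid(np.dot(W, X) + b)
    dA = -Y / A + (1 - Y) / (1 - A)   # derivative of the loss w.r.t. A
    dZ = dA * A * (1 - A)             # sigmoid'(Z) = A * (1 - A)
    dW = np.dot(dZ, X.T) / m
    db = np.mean(dZ, axis=1, keepdims=True)
    return dW, db


rng = np.random.default_rng(0)
X = rng.standard_normal((3, 5))                      # 3 features, 5 samples
Y = (rng.random((1, 5)) > 0.5).astype(np.float64)    # random 0/1 labels
W = rng.standard_normal((1, 3)) * 0.01
b = np.zeros((1, 1))

dW, db = analytic_grads(W, b, X, Y)

# Central differences: perturb one parameter at a time
eps = 1e-6
dW_num = np.zeros_like(W)
for i in range(W.shape[0]):
    for j in range(W.shape[1]):
        W_plus = W.copy()
        W_plus[i, j] += eps
        W_minus = W.copy()
        W_minus[i, j] -= eps
        dW_num[i, j] = (cost(W_plus, b, X, Y) - cost(W_minus, b, X, Y)) / (2 * eps)
db_num = (cost(W, b + eps, X, Y) - cost(W, b - eps, X, Y)) / (2 * eps)

print('max |dW - dW_num|:', np.max(np.abs(dW - dW_num)))
print('max |db - db_num|:', np.max(np.abs(db - db_num)))
```

Both differences should be tiny compared with the gradients themselves. A large gap would point to a mistake in one of the backward formulas.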

3. network.py

# coding: utf-8
# 2019/7/21 10:45
import numpy as np


class Network:
    def __init__(self, layers, loss, loss_der):
        self.layers = layers
        self.loss = loss
        self.loss_der = loss_der

    # Run forward propagation on the input, updating A layer by layer,
    # and return the final prediction
    def predict(self, X):
        A = X
        for layer in self.layers:
            A = layer.forward(A)
        return A

    # Connect the layers into a network:
    # 1. Forward-propagate the input to get the prediction Y_predict
    # 2. Compute the cost J from Y_predict and the true labels Y via the loss function
    # 3. Compute dA, the input to backward propagation, from the loss derivative
    # 4. Call each layer's backward function, which updates dA
    def train(self, X, Y, epochs=10):
        for i in range(epochs):
            Y_predict = self.predict(X)
            J = np.mean(self.loss(Y, Y_predict))
            print('epoch %d:loss=%f' % (i, J))
            dA = self.loss_der(Y, Y_predict)
            for layer in reversed(self.layers):
                # Update dA
                dA = layer.backward(dA)
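The training loop can be tried end to end on a toy problem without the image dataset. The sketch below makes several assumptions: `TinyLayer` is a condensed, hypothetical stand-in for the Layer class, the loop is inlined rather than wrapped in a Network object, the prediction is clipped before the loss and its derivative are evaluated, and the data is a synthetic two-feature problem invented for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1 / (1 + np.exp(-z))


def sigmoid_der(z):
    s = sigmoid(z)
    return s * (1 - s)


def relu(z):
    return np.maximum(0, z)


def relu_der(z):
    return (z >= 0).astype(np.float64)


class TinyLayer:
    # Condensed stand-in for Layer (hypothetical name, same update rule)
    def __init__(self, n_out, n_in, g, g_der, alpha, rng):
        self.W = rng.standard_normal((n_out, n_in)) * 0.01
        self.b = rng.standard_normal((n_out, 1)) * 0.01
        self.g, self.g_der, self.alpha = g, g_der, alpha

    def forward(self, A_prev):
        self.A_prev = A_prev
        self.Z = np.dot(self.W, A_prev) + self.b
        return self.g(self.Z)

    def backward(self, dA):
        m = dA.shape[1]
        dZ = dA * self.g_der(self.Z)
        dA_prev = np.dot(self.W.T, dZ)  # uses W before the update
        self.W -= self.alpha * np.dot(dZ, self.A_prev.T) / m
        self.b -= self.alpha * np.mean(dZ, axis=1, keepdims=True)
        return dA_prev


rng = np.random.default_rng(0)
X = rng.standard_normal((2, 200))               # 2 features, 200 samples
Y = (X[0:1] + X[1:2] > 0).astype(np.float64)    # label 1 when x0 + x1 > 0

layers = [TinyLayer(4, 2, relu, relu_der, 0.1, rng),
          TinyLayer(1, 4, sigmoid, sigmoid_der, 0.1, rng)]

losses = []
for epoch in range(50):
    A = X
    for layer in layers:                 # forward pass, layer by layer
        A = layer.forward(A)
    A = np.clip(A, 1e-10, 1 - 1e-10)     # keep log and division finite
    losses.append(float(np.mean(-(Y * np.log(A) + (1 - Y) * np.log(1 - A)))))
    dA = -Y / A + (1 - Y) / (1 - A)      # cross-entropy derivative
    for layer in reversed(layers):       # backward pass, last layer first
        dA = layer.backward(dA)

print('first loss %.4f, last loss %.4f' % (losses[0], losses[-1]))
```

With weights initialised near zero the first prediction is close to 0.5 for every sample, so the first loss is close to ln 2 (about 0.693). How quickly it falls from there depends on the learning rate and the number of epochs.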

4. loss.py

# coding: utf-8
# 2019/7/21 11:34
import numpy as np


# Cross-entropy loss function
def cross_entropy(y, y_predict):
    # Clip to avoid 0*log(0), which would turn the result into NaN
    y_predict = np.clip(y_predict, 1e-10, 1 - 1e-10)
    return -(y * np.log(y_predict) + (1 - y) * np.log(1 - y_predict))


# Derivative of the cross-entropy loss function
def cross_entropy_der(y, y_predict):
    # Clip here as well to avoid division by zero when the prediction saturates
    y_predict = np.clip(y_predict, 1e-10, 1 - 1e-10)
    return -y / y_predict + (1 - y) / (1 - y_predict)
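The effect of the `np.clip` call is easiest to see with predictions that are exactly 0 or 1. A small sketch, where `cross_entropy_unclipped` is a hypothetical variant written only for comparison:

```python
import numpy as np


def cross_entropy_unclipped(y, y_predict):
    # Same formula without the clipping step, for comparison only
    return -(y * np.log(y_predict) + (1 - y) * np.log(1 - y_predict))


def cross_entropy(y, y_predict):
    y_predict = np.clip(y_predict, 1e-10, 1 - 1e-10)
    return -(y * np.log(y_predict) + (1 - y) * np.log(1 - y_predict))


y = np.array([[1.0, 0.0]])
y_predict = np.array([[1.0, 0.0]])  # saturated but correct predictions

# Silence the log(0) and 0 * inf warnings that the unclipped version triggers
with np.errstate(divide='ignore', invalid='ignore'):
    raw = cross_entropy_unclipped(y, y_predict)
safe = cross_entropy(y, y_predict)

print('unclipped:', raw)   # both entries are nan
print('clipped:  ', safe)  # both entries are finite and close to 0
```

Without clipping, a perfectly confident correct prediction yields 0 * log(0) = 0 * (-inf), which is NaN in floating point and would then spread through the mean loss.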

5. activation.py

# coding: utf-8
# 2019/7/21 9:49
import numpy as np


def sigmoid(z):
    return 1 / (1 + np.exp(-z))


# Derivative of sigmoid
def sigmoid_der(z):
    x = np.exp(-z)
    return x / ((1 + x) ** 2)


def relu(z):
    return np.maximum(0, z)


# Derivative of relu (taken as 1 at z = 0)
def relu_der(z):
    return (z >= 0).astype(np.float64)
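The expression in `sigmoid_der` equals the familiar identity sigmoid(z) * (1 - sigmoid(z)). A short sketch that checks this, and also compares against a central finite difference (the step size `h` and the sample points are arbitrary choices for illustration):

```python
import numpy as np


def sigmoid(z):
    return 1 / (1 + np.exp(-z))


def sigmoid_der(z):
    x = np.exp(-z)
    return x / ((1 + x) ** 2)


z = np.linspace(-5, 5, 11)

# Closed-form identity: sigmoid'(z) = s * (1 - s)
s = sigmoid(z)
identity = s * (1 - s)

# Central finite difference as an independent check
h = 1e-5
numeric = (sigmoid(z + h) - sigmoid(z - h)) / (2 * h)

print('max gap vs identity:', np.max(np.abs(sigmoid_der(z) - identity)))
print('max gap vs numeric: ', np.max(np.abs(sigmoid_der(z) - numeric)))
```

One practical difference between the two forms: for strongly negative inputs `np.exp(-z)` overflows to infinity, so `x / ((1 + x) ** 2)` evaluates to inf / inf, which is NaN, whereas `s * (1 - s)` evaluates to 0 in that case.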

6. train.py

# coding: utf-8
# 2019/7/21 12:13
import data, layer, loss, network, activation
import pickle, time

# Train on the dataset and save the model:
# 1. Build 3 layers
# 2. Assemble the 3 layers into a network
# 3. Load the training data
# 4. Preprocess the input X
# 5. Feed the data into the network and train for 1000 epochs
# 6. Save the whole model
if __name__ == '__main__':
    learning_rate = 0.01
    L1 = layer.Layer(1000, 64 * 64 * 3, activation.relu, activation.relu_der, learning_rate)
    L2 = layer.Layer(500, 1000, activation.relu, activation.relu_der, learning_rate)
    L3 = layer.Layer(1, 500, activation.sigmoid, activation.sigmoid_der, learning_rate)
    net = network.Network([L1, L2, L3], loss.cross_entropy, loss.cross_entropy_der)
    X, Y = data.get_train()
    X = data.preprocess(X)
    net.train(X, Y, 1000)
    # The timestamp in the file name has ':' and ' ' replaced so it is a valid file name
    with open('models/model_%s.pickle' % (time.asctime().replace(':', '_').replace(' ', '-')), 'wb') as f:
        pickle.dump(net, f)
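The save step relies on two things: a timestamped file name with no ':' or ' ' characters, and an existing `models/` directory. The sketch below illustrates both in a temporary directory. The `params` dictionary is a hypothetical stand-in for the trained network, used so the example does not depend on the project's classes.

```python
import os
import pickle
import tempfile
import time

import numpy as np

# Same naming scheme as train.py: ':' and ' ' are replaced in the timestamp
stamp = time.asctime().replace(':', '_').replace(' ', '-')
file_name = 'model_%s.pickle' % stamp

# Hypothetical stand-in for a trained model: a dict of parameter arrays
params = {'W1': np.ones((2, 3)), 'b1': np.zeros((2, 1))}

with tempfile.TemporaryDirectory() as tmp:
    model_dir = os.path.join(tmp, 'models')
    os.makedirs(model_dir, exist_ok=True)  # open() fails if the directory is missing
    path = os.path.join(model_dir, file_name)

    with open(path, 'wb') as f:
        pickle.dump(params, f)
    with open(path, 'rb') as f:
        restored = pickle.load(f)

print(file_name)
print(np.array_equal(restored['W1'], params['W1']))  # True
```

When the whole `net` object is pickled, as in train.py, the classes and activation functions are stored by reference. The script that loads the model therefore needs layer.py, network.py, activation.py, and loss.py to be importable.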

7. evaluate.py

# coding: utf-8
# 2019/7/21 14:17
import data
import pickle
import numpy as np

if __name__ == '__main__':
    model_name = 'model_Sun-Jul-21-14_41_42-2019.pickle'
    # Load the model
    with open('models/' + model_name, 'rb') as f:
        net = pickle.load(f)
    # Load the test dataset
    X, Y = data.get_test()
    X = data.preprocess(X)
    # Predict from the input data X
    Y_predict = net.predict(X)
    # Threshold the sigmoid output at 0.5 to get 0/1 labels
    Y_pred_float = (Y_predict > 0.5).astype(np.float64)
    # Compute the accuracy (np.int was removed from NumPy, so use the built-in int)
    accuracy = np.sum(np.equal(Y_pred_float, Y).astype(int)) / Y.shape[0]
    print('accuracy:', accuracy)
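The accuracy line compares a prediction of shape (1, m) with labels of shape (m,), which works through NumPy broadcasting. A small sketch with made-up values, using `np.mean` as an equivalent way to write the same ratio:

```python
import numpy as np

# Made-up sigmoid outputs and labels, for illustration only
Y_predict = np.array([[0.9, 0.2, 0.6, 0.4]])  # shape (1, 4), as returned by predict
Y = np.array([1, 0, 0, 0])                    # shape (4,), as loaded from the dataset

Y_pred_label = (Y_predict > 0.5).astype(np.float64)  # [[1., 0., 1., 0.]]
matches = np.equal(Y_pred_label, Y)                  # broadcasts to shape (1, 4)
accuracy = np.mean(matches)                          # fraction of correct predictions

print('accuracy:', accuracy)  # accuracy: 0.75
```

Three of the four thresholded predictions match the labels, so the accuracy is 0.75. The broadcast stays (1, m) only because the labels are one-dimensional. If they were stored as a column of shape (m, 1), the comparison would broadcast to (m, m) and the ratio would no longer be an accuracy.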

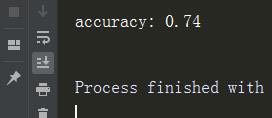

Result
